I’ve spent the last decade “behind the curtain” building innovative solutions for customers and companies, and I’ve seen how Managed Service Providers (MSPs) and Managed Security Service Providers (MSSPs) operate their businesses. Profit margins for managed services companies are thin, and competition is fierce. Lackluster customer onboarding and management won’t set your service apart from rivals, and scaling services without hiring an excessive number of engineers is challenging. Kubernetes and DevOps principles can revolutionize Managed Services by increasing margins and speeding time to delivery.

Having spent the last year re-architecting, optimizing, and extending the functionality of the Cribl Sandbox, I’ve come to appreciate the untapped power of Kubernetes. The Cribl Sandbox platform runs on AWS EKS, which lets us run multi-tenant workloads securely yet cost-effectively.

In my research, I read many articles about Kubernetes as the future of technology and about the future of Kubernetes itself, but articles on using Kubernetes for Managed Services are sparse… cue this blog.

Why Kubernetes?

Kubernetes provides a competitive advantage through its flexibility, its ability to run almost anywhere, and its standardized way of managing deployed resources. Tell Kubernetes what you want, and the control plane continuously reconciles the cluster toward that desired state.
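
To make “the control plane reconciles it for you” concrete, here is a toy Python model of the diff step a controller performs: compare the desired state with the observed state and emit the actions that close the gap. It is a hypothetical simplification (the resource names and the `reconcile`/`apply_actions` helpers are illustrative), not Kubernetes’ actual controller code, which watches the API server for changes rather than comparing two dictionaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str  # "create", "update", or "delete"
    name: str


def reconcile(desired: dict[str, dict], observed: dict[str, dict]) -> list[Action]:
    """Toy diff step: list the actions that move observed state toward desired state.

    Both arguments map a resource name to its spec. Hypothetical model only.
    """
    actions: list[Action] = []
    for name, spec in sorted(desired.items()):
        if name not in observed:
            actions.append(Action("create", name))
        elif observed[name] != spec:
            actions.append(Action("update", name))
    for name in sorted(observed):
        if name not in desired:
            actions.append(Action("delete", name))
    return actions


def apply_actions(actions: list[Action], desired: dict[str, dict],
                  observed: dict[str, dict]) -> dict[str, dict]:
    """Return the observed state after executing the actions (simulated)."""
    state = dict(observed)
    for action in actions:
        if action.kind == "delete":
            del state[action.name]
        else:  # create or update: copy the desired spec into the live state
            state[action.name] = desired[action.name]
    return state


if __name__ == "__main__":
    desired = {"web": {"replicas": 3}, "worker": {"replicas": 2}}
    observed = {"web": {"replicas": 1}, "legacy": {"replicas": 1}}
    actions = reconcile(desired, observed)
    print(actions)
    # After one pass the states match, so the next pass has nothing to do.
    print(reconcile(desired, apply_actions(actions, desired, observed)))
```

A real controller repeats this diff-and-act cycle indefinitely, so drift (a deleted Pod, a manual edit) is corrected without anyone re-running a script.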

Kubernetes also unlocks resource efficiency through application containerization: deploy only the resources you need and scale up or down as demand fluctuates. Just as VMware revolutionized bare-metal server utilization in the early aughts, Kubernetes is doing the same for applications today.
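
“Scale up or down as demand fluctuates” is handled in Kubernetes by the HorizontalPodAutoscaler, whose documentation gives the core rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipping changes when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below implements only that rule plus min/max clamping; the real controller also accounts for things like Pod readiness and stabilization windows, and the default bounds here are illustrative.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA scaling rule (illustrative bounds, not cluster defaults)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Close enough to target: leave the replica count alone to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured minimum and maximum replica counts.
    return max(min_replicas, min(max_replicas, desired))
```

For example, 4 replicas averaging 90% CPU against a 60% target scale to ceil(4 × 1.5) = 6, while 4 replicas at 30% shrink to 2, freeing capacity for other tenants.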

Containers #FTW

Containerization is well suited to the cloud. Companies can leverage managed Kubernetes offerings from Cloud Service Providers (CSPs) such as Amazon Elastic Kubernetes Service (EKS), Microsoft Azure Kubernetes Service (AKS), and Google Kubernetes Engine (GKE). However, these services come with a premium price tag, and organizations are beginning to reevaluate their cloud strategies or are shifting away from the cloud outright due to costs.

The X (f.k.a. Twitter) Engineering team announced in 2023 that they had drastically reduced their monthly cloud spend by migrating object storage to an on-premises model.

“Among the changes we made was a shift of all media/blob artifacts out of the cloud, which reduced our overall cloud data storage size by 60%, and separately, we succeeded in reducing cloud data processing costs by 75%.”

Politics aside, this is a telling statement about cloud usage, because our customers follow a similar service utilization pattern.

The Solution: Nesting Matryoshkas 🪆

So, back to Managed Services… Running intensive workloads exclusively in the cloud isn’t feasible given the cost of processing such large volumes of data. Tackling the service provider scalability problem requires some creative engineering.

When discussing what we’re building with others, I like to use the phrase “Russian nesting dolls” (Matryoshkas). Nested control planes allow us to push the power of Kubernetes into our customers’ environments while eliminating the one-off “snowflake” infrastructure issues each environment brings. Kubernetes abstracts these differences away and lets us deploy software using a DevOps methodology.

What if we could extend this multi-tenant idea to run Kubernetes as a Service inside Kubernetes? I thought you’d never ask… Nested Kubernetes control planes, deployed as Pods on a management cluster and able to deploy workloads at the edge inside our customers’ environments, are an up-and-coming pattern in the Kubernetes ecosystem.

Codename: Bulkhead

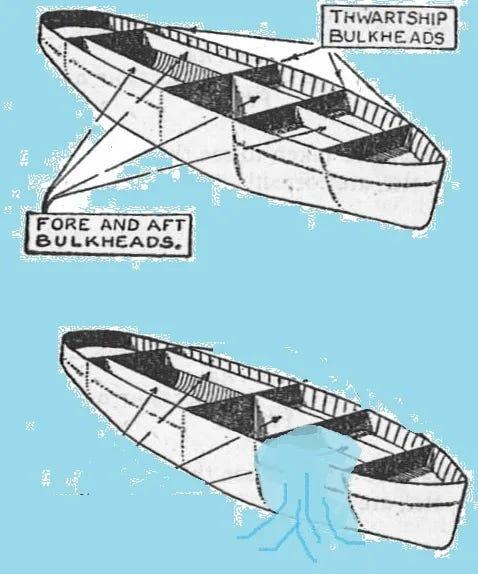

We’re not sailing the RMS Titanic here, but the same reasoning applies to this nested control plane architecture: any issue is isolated to a single “bulkhead,” preventing it from spilling into neighboring deployments or customers. Fittingly, this is also why we’ve #codenamed our service “Bulkhead.”

Bulkheads are watertight compartments inside a ship. In the event of an incident, problems are contained to the affected bulkhead(s), preventing a cascading failure that would sink the ship.
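
Bulkhead isolates tenants with nested control planes, but the compartment idea can be illustrated with a much simpler, single-cluster analogue: give each customer its own Namespace with a ResourceQuota so one tenant cannot exhaust shared capacity. The sketch below renders those two standard Kubernetes objects as JSON (which `kubectl` accepts) from one function; it is not how Bulkhead itself is built, and the `bulkhead-` prefix, `tenant` label, quota name, and quota values are hypothetical.

```python
import json
import re

# Namespace names must be RFC 1123 DNS labels: lowercase alphanumerics and '-', max 63 chars.
DNS_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def bulkhead_manifests(customer: str, cpu: str, memory: str, max_pods: int) -> list[dict]:
    """Render a hypothetical per-tenant Namespace plus a ResourceQuota capping it."""
    namespace = f"bulkhead-{customer}"
    if len(namespace) > 63 or not DNS_LABEL.fullmatch(namespace):
        raise ValueError(f"invalid namespace name: {namespace!r}")
    return [
        {
            "apiVersion": "v1",
            "kind": "Namespace",
            "metadata": {"name": namespace, "labels": {"tenant": customer}},
        },
        {
            "apiVersion": "v1",
            "kind": "ResourceQuota",
            "metadata": {"name": "bulkhead-quota", "namespace": namespace},
            # Hard ceilings: the tenant's "watertight wall" on shared capacity.
            "spec": {
                "hard": {
                    "requests.cpu": cpu,
                    "requests.memory": memory,
                    "pods": str(max_pods),
                }
            },
        },
    ]


if __name__ == "__main__":
    # A v1 List bundles both objects into a single document.
    bundle = {"apiVersion": "v1", "kind": "List",
              "items": bulkhead_manifests("acme", "8", "32Gi", 50)}
    print(json.dumps(bundle, indent=2))
```

Piping the output into `kubectl apply -f -` would create the compartment; because every tenant comes from the same function, no customer ends up with a hand-built “snowflake” configuration.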

We’ve taken inspiration from a similar project called Kamaji and its bulkhead-like architecture, but we’ve decided to build our product from the ground up using components of the k3s project.

Kubernetes lets us realize our vision of an Infrastructure as Code-backed service, in which Managed Services are deployed, maintained, upgraded, and decommissioned using tools like Terraform/OpenTofu, Pulumi, Ansible, and some good old-fashioned YAML manifests.

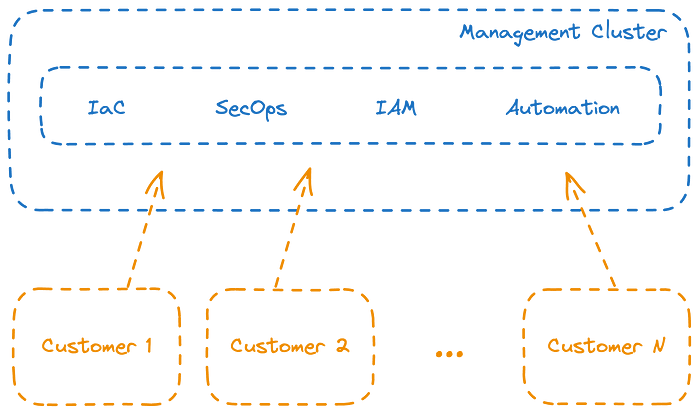

In this architecture, the management cluster hosts workloads that require state, while compute- and network-intensive workloads are pushed to the edge in a customer bulkhead, giving our customers flexibility and control over where these resources run.

Extending the management cluster idea, as a Managed Service Provider we can use this environment to deploy services like Cribl Leaders, which have modest compute and networking requirements, while deploying Cribl Worker Nodes in each customer bulkhead. Infrastructure as Code ensures consistency across all managed resources while reducing the operational burden of platform administration.
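
The split described above (Leaders on the shared management cluster, Workers inside each customer’s bulkhead) can be expressed as a small, declarative placement plan generated from a single list of customers. This is a hypothetical sketch: the component names, the `mgmt` and `bulkhead-<customer>` cluster names, and the single Leader replica per customer are illustrative assumptions, not Cribl or Bulkhead identifiers.

```python
from dataclasses import dataclass

# Hypothetical name for the shared management cluster.
MANAGEMENT_CLUSTER = "mgmt"


@dataclass(frozen=True)
class Placement:
    customer: str
    component: str  # "cribl-leader" or "cribl-worker" (illustrative names)
    cluster: str    # cluster the component is deployed to
    replicas: int


def plan(customers: dict[str, int]) -> list[Placement]:
    """Map each customer (-> desired worker count) to leader and worker placements."""
    placements: list[Placement] = []
    for customer, workers in sorted(customers.items()):
        if workers < 1:
            raise ValueError(f"{customer}: at least one worker is required")
        # Lightweight, stateful control component stays on the management cluster.
        placements.append(Placement(customer, "cribl-leader", MANAGEMENT_CLUSTER, 1))
        # Compute- and network-heavy data processing runs in the customer's bulkhead.
        placements.append(Placement(customer, "cribl-worker", f"bulkhead-{customer}", workers))
    return placements


if __name__ == "__main__":
    for p in plan({"acme": 4, "globex": 2}):
        print(p)
```

In practice, a plan like this would be fed to an IaC tool such as Terraform/OpenTofu or Pulumi; generating every customer’s layout from the same code path is what keeps the managed estate consistent as it grows.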

Kubernetes and the Future

While Kubernetes is already a proven platform elsewhere, it has the potential to significantly reshape the Managed Services world. Customers realize faster time-to-value and spend less time installing software and troubleshooting issues. Getting right to using the service in hours or days instead of weeks or months keeps management happy, keeps consultants billable, and lets everyone solve customer problems with less frustration.

Kubernetes at the Edge is an extensible platform that allows for growth. It empowers customers to solve complex problems by leveraging the pooled resources of a Managed Service Provider instead of hiring multiple experts on specific platforms.

Are you on a journey to enhance your observability? We’re here to help and would love to hear from you! We currently offer Managed Cribl on our platform and plan to expand our portfolio to include additional software in the future. If you’re ready to get started with Cribl, contact us to start the conversation, and we will support you in any way we can.

Do you like solving significant challenges? If you want to help build an innovative platform, we’d love to hear from you! I am hiring Site Reliability Engineers (SREs) and Developers with a background in Cloud, Kubernetes, Terraform, Ansible, JavaScript, and observability tools like Cribl and Grafana. We’re also hiring Cribl and Splunk Consultants to help our customers with their observability platforms.